According to a recent announcement on Apple's newsroom, two of the world's largest technology companies are partnering up to "assist" the global fight against the spread of the COVID-19 pandemic.

Here is the announcement:

In May, Google and Apple will launch a solution which includes application programming interfaces (APIs) and operating system technology to assist in contact tracing. For those of you who aren't aware, here is an explanation of an API:

"Think of an API like a menu in a restaurant. The menu provides a list of dishes you can order, along with a description of each dish. When you specify what menu items you want, the restaurant’s kitchen does the work and provides you with some finished dishes. You don’t know exactly how the restaurant prepares that food, and you don’t really need to.

Similarly, an API lists a bunch of operations that developers can use, along with a description of what they do. The developer doesn’t necessarily need to know how, for example, an operating system builds and presents a “Save As” dialog box. They just need to know that it’s available for use in their app.

This isn’t a perfect metaphor, as developers may have to provide their own data to the API to get the results, so perhaps it’s more like a fancy restaurant where you can provide some of your own ingredients the kitchen will work with.

But it’s broadly accurate. APIs allow developers to save time by taking advantage of a platform’s implementation to do the nitty-gritty work. This helps reduce the amount of code developers need to create, and also helps create more consistency across apps for the same platform. APIs can control access to hardware and software resources."

By providing APIs, Apple and Google will make it easier for developers to create applications that can be used to trace contacts.

I found three parts of this press release interesting:

1.) Apple and Google plan to "maintain strong protections around user privacy."

2.) Users will have a choice about whether to opt in or not.

3.) Privacy, transparency and consent are of "utmost importance".

Interestingly, despite the efforts to preserve user privacy, the app will "enable interaction with a broader ecosystem of apps and government health authorities" using wireless technology (i.e. Bluetooth in the second phase). Obviously, this has to be the case since it is governments that are most interested in seeing who has been in contact with COVID-19-positive individuals and identifying exactly who they are, destroying any semblance of individual user privacy.

Basically, under this new "ecosystem", if a user tests positive for COVID-19 and adds that data to the Google/Apple app, users with whom they came into close contact over the previous number of days will be notified of that contact. According to Bloomberg, phase one will be app-based; in the second phase, Google and Apple will add the technology directly into their operating systems. With both companies having a total of 3 billion users, the technology will be non-voluntary for over one-third of the world's total population.
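To make that notification flow concrete, here is a toy sketch of one way it could work. It rests on assumptions of mine rather than anything in the press release: that nearby phones swap random identifiers, and that a user who tests positive publishes the identifiers their phone sent out. The names `Phone`, `meet` and `was_exposed` are hypothetical and are not part of any Apple or Google API, and the "previous number of days" window is left out for brevity.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class Phone:
    """Hypothetical handset; none of these names come from Apple or Google."""
    name: str
    sent: list = field(default_factory=list)   # random IDs this phone has broadcast
    heard: set = field(default_factory=set)    # IDs received from nearby phones

    def broadcast(self) -> str:
        # Assumption: each broadcast uses a fresh random ID.
        token = secrets.token_hex(8)
        self.sent.append(token)
        return token


def meet(a: Phone, b: Phone) -> None:
    """Simulate two phones in close range swapping one ID each."""
    b.heard.add(a.broadcast())
    a.heard.add(b.broadcast())


def was_exposed(phone: Phone, published: set) -> bool:
    """True if this phone heard any ID published by a positive user."""
    return not phone.heard.isdisjoint(published)


alice, bob, carol = Phone("alice"), Phone("bob"), Phone("carol")
meet(alice, bob)                 # carol never comes near alice

published = set(alice.sent)      # alice tests positive and opts to report
exposure = {p.name: was_exposed(p, published) for p in (bob, carol)}
print(exposure)
```

In this sketch, only phones that were near the reporting user end up with a match. Whether the real system keeps that match on the handset or passes it on to health authorities is exactly the kind of detail worth watching.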

The potential for privacy breaches has some lawmakers in the United States concerned. Here is a letter from the House Freedom Caucus to President Donald Trump regarding that very issue:

At this point, governments are already using aggregated and anonymized data drawn from GPS and cell towers to inform public health efforts on such issues as the effectiveness of shelter-in-place orders, as shown in this release from Google:

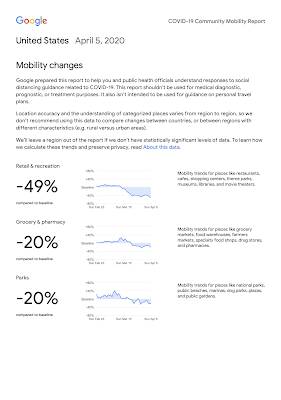

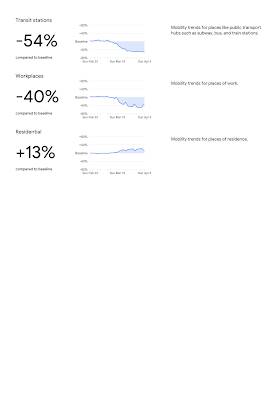

Here is one of Google's COVID-19 Community Mobility Reports for the United States:

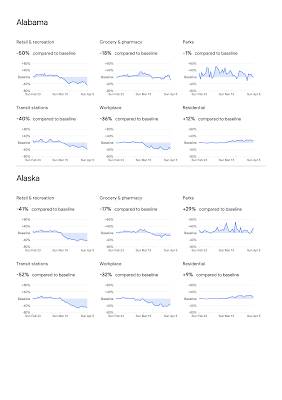

Google also supplies state-level data as shown here:

According to the Electronic Frontier Foundation, there is an inherent problem with aggregated data:

"As a result, companies like Google that produce reports based on aggregated location data from users should release their full methodology as well as information about who these reports are shared with and for what purpose. To the extent they only share certain data with selected “partners,” these groups should agree not to use the data for other purposes or attempt to re-identify individuals whose data is included in the aggregation. And, as Google has already done, companies should pledge to end the use of this data when the need to fight COVID-19 subsides.

For any data sharing plan, consent is critical: Did each person consent to the method of data collection, and did they consent to the use? Consent must be specific, informed, opt-in, and voluntary. Ordinarily, users should have the choice of whether to opt-in to every new use of their data, but we recognize that obtaining consent to aggregate previously acquired location data to fight COVID-19 may be difficult with sufficient speed to address the public health need. That's why it's especially important that users should be able to review and delete their data at any time. The same should be true for anyone who truly consents to the collection of this information. Many entities that hold location information, like data brokers that collect location from ads and hidden tracking in apps, can’t meet these consent standards. Yet many of the uses of aggregated location data that we’ve seen in response to COVID-19 draw from these tainted sources. At the very least, data brokers should not profit from public health insights derived from their stores of location data, including through free advertising. Nor should they be allowed to “COVID wash” their business practices: the existence of these data stores is unethical, and should be addressed with new consumer data privacy laws.

Because aggregation reduces the risk of revealing intimate information about individuals’ lives, we are less concerned about this use of location data to fight COVID-19 compared to individualized tracking. Of course, the choice of the aggregation parameters generally needs to be done by domain experts. As in the Facebook and Google examples above, these experts will often be working within private companies with proprietary access to the data. Even if they make all the right choices, the public needs to be able to review these choices because the companies are sharing the public’s data. For the experts doing the aggregation, there’s often pressure to reduce the privacy properties in order to generate an aggregate data set that a particular decision-maker claims must be more granular in order to be meaningful to them. Ideally, companies would also consult outside experts before moving forward with plans to aggregate and share location data. Getting public input on whether a given data-sharing scheme sufficiently preserves privacy can help reduce the bias that such pressure creates.

Finally, we should remember that location data collected from smartphones has limitations and biases. Smartphone ownership remains a proxy for relative wealth, even in regions like the United States where 80% of adults have a smartphone. People without smartphones tend to already be marginalized, so making public policy based on aggregate location data can wind up disregarding the needs of those who simply don’t show up in the data, and who may need services the most. Even among the people with smartphones, the seeming authoritativeness and comprehensiveness of large scale data can cause leaders to reach erroneous conclusions that overlook the needs of people with fewer resources. For example, data showing that people in one region are traveling more than people in another region might not mean, as first appears, that these people are failing to take social distancing seriously. It might mean, instead, that they live in an underserved area and must thus travel longer distances for essential services like groceries and pharmacies.

In general, our advice to organizations that consider sharing aggregate location data: Get consent from the users who supply the data. Be cautious about the details. Aggregate on the highest level of generality that will be useful. Share your plans with the public before you release the data. And avoid sharing “deidentified” or “anonymized” location data that is not aggregated—it doesn’t work." (my bolds)
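As a rough illustration of what "aggregate on the highest level of generality" can mean in practice, here is a minimal sketch that turns individual location pings into per-region counts of distinct users. The function name `aggregate`, the sample data and the `min_count` cut-off for hiding small groups are my own assumptions for illustration; they are not drawn from the EFF text or from Google's methodology.

```python
from collections import Counter


def aggregate(pings, min_count=3):
    """Count distinct users per region and drop regions with too few users.

    pings: iterable of (user_id, region) pairs (hypothetical input format).
    min_count: assumed threshold below which a region is withheld, since a
    very small group is easier to tie back to individuals.
    """
    distinct = {(user, region) for user, region in pings}   # one entry per user per region
    counts = Counter(region for _, region in distinct)
    return {region: n for region, n in counts.items() if n >= min_count}


pings = [
    ("u1", "North"), ("u2", "North"), ("u3", "North"),
    ("u1", "North"),            # repeat ping from the same user, counted once
    ("u4", "South"),            # a single user: withheld from the report
]
report = aggregate(pings)
print(report)
```

The published report contains only region-level totals, with no user identifiers or individual pings, and the choice of region size and cut-off is precisely the sort of parameter the EFF argues the public should be able to review.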

It is important that we all remember that Google and Apple still retain all of our personal data, whether they share it or not. We should also keep in mind that, while participants can "opt in" at this stage, that may not be the case in the future, and participation may be forced under law.

While Google and Apple claim that the development of their new application is for "the greater good", we must remind ourselves about how the authorities have chiselled away much of our privacy in the post-September 11th world, all in the name of "the greater good".

