A recent posting on YouTube's Official Blog gives us a sense of the lengths to which Google will go to ensure that we are all protected from what the Google gods deem "harmful content". While the company admits to censoring content that violates its policies, it claims that it is "preserving the power of an open platform".

Google claims that YouTube is "built on the premise of openness" as shown in this quote from Susan Wojcicki, YouTube's Chief Executive Officer, on YouTube's Creator Blog:

"YouTube is built on the premise of openness. Based on this open platform, millions of creators around the world have connected with global audiences and many of them have built thriving businesses in the process. But openness comes with its challenges, which is why we also have Community Guidelines that we update on an ongoing basis. Most recently, this includes our hate speech policy and our upcoming harassment policy. When you create a place designed to welcome many different voices, some will cross the line. Bad actors will try to exploit platforms for their own gain, even as we invest in the systems to stop them. As more issues come into view, a rising chorus of policymakers, press and pundits are questioning whether an open platform is valuable… or even viable….

A commitment to openness is not easy. It sometimes means leaving up content that is outside the mainstream, controversial or even offensive. But I believe that hearing a broad range of perspectives ultimately makes us a stronger and more informed society, even if we disagree with some of those views. A large part of how we protect this openness is not just guidelines that allow for diversity of speech, but the steps that we’re taking to ensure a responsible community. I’ve said a number of times this year that this is my number one priority. A responsible approach toward managing what’s on our platform protects our users and creators like you. It also means we can continue to foster all the good that comes from an open platform."

Apparently, offensiveness is in the "eyes" of Google: offensive content is whatever the company determines falls outside its community policies.

Google has created four principles that outline its approach to responsibility:

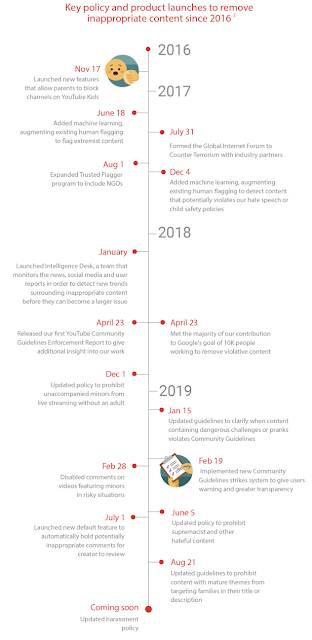

In the current posting on YouTube's Official Blog, Ms. Wojcicki focuses on "Remove". The posting begins by outlining the improvements Google/YouTube has made in removing inappropriate content since 2016:

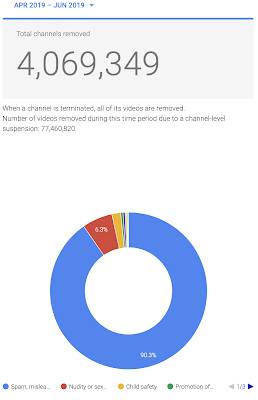

The company claims that it walks a fine line between preserving free expression and protecting and promoting a vibrant community by enforcing its policies. YouTube's quarterly Transparency Report for April to June 2019 shows the following channel removals:

Since the data in the chart is difficult to read, here are the percentage and total number of channels removed for each reason:

Spam, misleading and scams – 90.3% (3,676,012)

Nudity or sex – 6.3% (254,482)

Child safety – 1.8% (73,504)

Promotion of violence and violent extremism – 0.5% (18,831)

Hateful or abusive – 0.4% (17,818)

Harassment and cyberbullying – 0.4% (14,668)

Other – 0.1% (5,811)

When a channel is removed, all of its videos are removed; with the removal of just over 4 million channels, a total of 77,460,820 videos were removed. Please note that these numbers do not include the channels and videos that were demonetized by Google, meaning that their creators no longer make money from their YouTube video endeavours.

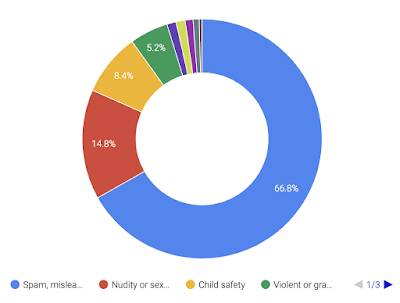

Here is a graphic showing a breakdown of videos removed by reason for their removal:

The vast majority of videos that were removed violated YouTube's "spam, misleading and scams" rules. While YouTube makes a really big deal about removing videos and channels that promote hate, these videos in fact make up a very small portion of the total number of videos removed in the quarter. For breaching its hate speech rules, the company removed 111,185 videos, or 1.2 percent of the 9,015,566 videos removed in total. These videos came from more than 17,000 channels, five times the number of channels removed for hate speech in the previous quarter, an increase that followed YouTube's new rules regarding hate speech.

In addition to removing channels and videos, YouTube uses a combination of people and technology to remove comments posted by viewers. In the quarter between April and June 2019, a total of 537,759,344 comments were removed; 99.3 percent of them were flagged automatically and 0.7 percent were flagged by humans. This is nearly twice the number of comments removed in the first quarter of 2019, a jump driven by an increase in hate speech removals.

As I noted above, YouTube is relying heavily on machines to review and flag bad content. Here is a quote from the report:

"In 2017, we expanded our use of machine learning technology to help detect potentially violative content and send it for human review. Machine learning is well-suited to detect patterns, which helps us to find content similar (but not exactly the same) to other content we’ve already removed, even before it’s ever viewed. These systems are particularly effective at flagging content that often looks the same — such as spam or adult content. Machines also can help to flag hate speech and other violative content, but these categories are highly dependent on context and highlight the importance of human review to make nuanced decisions. Still, over 87% of the 9 million videos we removed in the second quarter of 2019 were first flagged by our automated systems.

We’re investing significantly in these automated detection systems, and our engineering teams continue to update and improve them month by month. For example, an update to our spam detection systems in the second quarter of 2019 lead to a more than 50% increase in the number of channels we terminated for violating our spam policies."

Across Google, the company now has more than 10,000 people working to address content concerns.

It is quite interesting to note that all of this concern about content on Google's YouTube platform appeared after Hillary Clinton lost the election that she was destined to win in November 2016. As users of Google's YouTube product, we have to trust that this massive and highly influential arm of America's technology tyrants is really looking out for our good, protecting us from the evil that lies out there just because they are so concerned about our welfare…but only since 2016.


